Machine learning has garnered a lot of attention in the past few years. The reason behind this might be the high amount of data from applications, the ever-increasing computational power, the development of better algorithms, and a deeper understanding of data science.

We have already talked about artificial intelligence (AI) in a previous blog post. This time, we will look at machine learning: what it is, how it works, and how it applies to ITSM, with examples along the way.

It’s time to dive right into it with the most obvious question in everyone’s mind.

What exactly is Machine Learning?

In short, machine learning is a subfield of artificial intelligence (AI) that overlaps heavily with data science. It generally aims to understand the structure of data and fit that data into models that machine learning engineers and agents in different fields of work can understand and use.

Let’s take a look at its history. In 1959, Arthur Samuel, an American pioneer in computer gaming and artificial intelligence, coined the term “machine learning.” Back then, he described it as giving computers the ability to learn without being explicitly programmed.

And in 1997, Tom Mitchell gave a “well-posed,” more formal definition: “A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E.” For a spam filter, for example, T is classifying emails, P is the share of emails classified correctly, and E is a set of emails already labeled as spam or not spam.

This sets the stage for our next big question.

How does Machine Learning work?

Although machine learning is a field within computer science and AI, it differs from traditional computational approaches. In traditional computing, algorithms are sets of explicitly programmed instructions that computers follow to calculate results or solve problems.

But things are a little different in machine learning: its algorithms allow computers to train on data inputs and use statistical analysis to output values that fall within a specific range.

As a result, machine learning helps computers build models from sample data in order to automate decision-making based on data inputs. Neural networks are one of the more complex kinds of model built this way.
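To make the contrast concrete, here is a minimal sketch in Python. The sample data, the function names (`rule_based`, `fit_threshold`, `learned`), and the "halfway between the class averages" rule are all assumptions made for illustration, not a standard algorithm from any library.

```python
from statistics import mean

# Traditional approach: the programmer hard-codes the decision rule.
def rule_based(diameter_cm):
    return "large" if diameter_cm > 5.0 else "small"

# Machine learning approach: the rule (here, a single threshold) is
# estimated from labeled sample data instead of being written by hand.
def fit_threshold(samples):
    small = [x for x, label in samples if label == "small"]
    large = [x for x, label in samples if label == "large"]
    # Place the threshold halfway between the two class averages.
    return (mean(small) + mean(large)) / 2

# Made-up sample data: (diameter in cm, label)
samples = [(3.0, "small"), (4.0, "small"), (8.0, "large"), (9.0, "large")]
threshold = fit_threshold(samples)  # 6.0 for this data

def learned(diameter_cm):
    return "large" if diameter_cm > threshold else "small"
```

With this data, `rule_based(5.5)` returns `"large"` because of the hand-picked cutoff, while `learned(5.5)` returns `"small"` because the cutoff came from the samples. Change the samples and the learned rule changes with them, with no code edits.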

What are the different methods of Machine Learning?

Machine learning tasks fall into broad categories. These categories are defined by how the system learns or by the feedback it receives while it is being developed.

Two of the most widely adopted machine learning methods are:

- Supervised learning, which trains algorithms based on example input and output data that humans label.

- Unsupervised learning, which gives the machine learning algorithm unlabeled data and leaves it to find structure within its input data.

Let’s explore these methods in more detail.

Supervised learning

In supervised learning, a machine learning algorithm learns from example data and associated target responses that consist of numeric values or string labels (classes or tags). Once trained, it predicts the response for examples it has not seen before.

This approach is similar to human learning under the supervision of a teacher. The teacher provides good examples for the student to memorize, and the student then derives general rules from these specific examples. A commonly known supervised learning algorithm is linear regression.
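As an illustration of supervised learning, the sketch below fits a straight line to labeled examples using ordinary least squares for a single feature. The data points and the names `fit_line` and `predict` are assumptions made for this example; real projects would normally rely on an established library instead.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    slope = covariance / variance
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Made-up labeled examples: inputs xs with known target responses ys.
# They follow y = 2x + 1 exactly, so the fit should recover that line.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)

def predict(x):
    return slope * x + intercept
```

Here the "teacher" is the list of known `ys`. After fitting, `predict(5.0)` gives `11.0`, a response for an input that was not in the examples.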

Unsupervised learning

In unsupervised learning, data is unlabeled, so the learning algorithm is left to find commonalities among its input data. As unlabeled data are more abundant than labeled data, machine learning methods that facilitate unsupervised learning are particularly valuable.

The goal of unsupervised learning may be as straightforward as discovering hidden patterns within a dataset. It may also serve the purpose of feature learning, which allows the machine to automatically find the representations it needs to classify raw data.
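One common way to find structure in unlabeled data is clustering. The following is a minimal sketch of k-means on one-dimensional data; the values, the starting centers, and the function name `k_means_1d` are assumptions chosen to keep the example small.

```python
def k_means_1d(values, centers, iterations=10):
    """Group numbers around the given starting centers; no labels are used."""
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        # Assignment step: each value joins its nearest center.
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Update step: each center moves to the average of its cluster.
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers, clusters

# Made-up, unlabeled measurements with two visible groups.
values = [1.0, 1.5, 2.0, 9.0, 9.5, 10.0]
centers, clusters = k_means_1d(values, centers=[0.0, 5.0])
```

The algorithm is never told which value belongs to which group. It ends with centers near `1.5` and `9.5` purely from the commonalities in the input.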

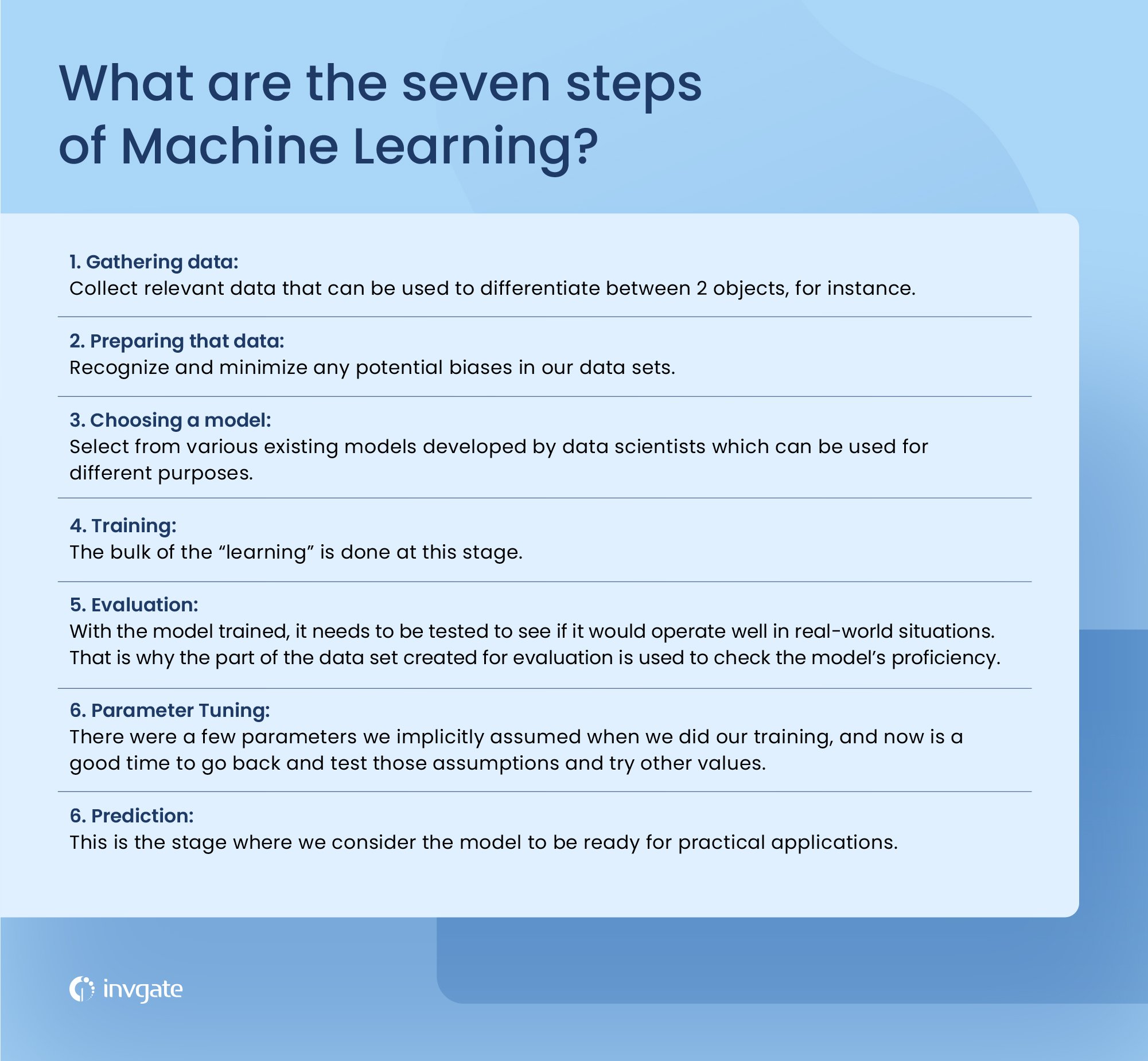

The seven steps of Machine Learning

1. Gathering data

Suppose, for instance, that we want a model that can tell two kinds of cookies apart. Our first step would be to gather relevant data. We could use many different parameters to classify a cookie as a chocolate chip cookie or a butter cookie.

For the sake of simplicity, we will take only 2 features for our model to work with. The first feature is whether there are spots on the cookie, and the second is the size of the cookie. We hope our model can accurately differentiate between the 2 cookies using these features.
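A small sketch of what the gathered data might look like in Python follows. The `Cookie` class, its field names, and every measurement are hypothetical; they only show how the two features and the label could be recorded.

```python
from dataclasses import dataclass

@dataclass
class Cookie:
    has_spots: bool      # feature 1: are there spots on the cookie?
    diameter_cm: float   # feature 2: size of the cookie
    label: str           # "chocolate chip" or "butter"

# Hypothetical observations, invented for this example.
cookies = [
    Cookie(True, 7.5, "chocolate chip"),
    Cookie(True, 8.0, "chocolate chip"),
    Cookie(False, 5.0, "butter"),
    Cookie(False, 5.5, "butter"),
]

# Most models expect numbers, so spots become 1.0 or 0.0,
# and labels are kept in a separate list in the same order.
features = [(1.0 if c.has_spots else 0.0, c.diameter_cm) for c in cookies]
labels = [c.label for c in cookies]
```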

Gathering data from online sources for machine learning is simpler if you use an API-based scraping tool, and this is often the starting point for projects that don’t have the means to generate the necessary information internally.

2. Preparing that data

Once we have gathered the data for the two features, our next step would be to prepare data for further actions.

A key focus of this stage is to recognize and minimize any potential biases in our data sets for the 2 features. First, we would randomize the order of our data for the 2 cookies. We do not want the order to affect the model’s choices.

Well-prepared data for your model can improve its efficiency. Plus, it can help reduce the model's blind spots, which translates to greater accuracy of predictions.
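The randomizing step, together with setting part of the data aside for later evaluation, can be sketched as follows. The rows, the 75/25 split, the seed, and the name `shuffle_and_split` are assumptions for illustration.

```python
import random

def shuffle_and_split(rows, train_fraction=0.75, seed=42):
    """Return (training_rows, evaluation_rows) after shuffling a copy of rows."""
    shuffled = list(rows)                  # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# Hypothetical rows: (has_spots, diameter_cm, label), deliberately sorted
# by label so that the original order would bias a naive split.
rows = [
    (1, 7.5, "chocolate chip"), (1, 8.0, "chocolate chip"),
    (1, 7.0, "chocolate chip"), (1, 8.5, "chocolate chip"),
    (0, 5.0, "butter"), (0, 5.5, "butter"),
    (0, 6.0, "butter"), (0, 4.5, "butter"),
]

training_rows, evaluation_rows = shuffle_and_split(rows)
```

Without the shuffle, the first six rows would contain every chocolate chip cookie and only two butter cookies, and the evaluation rows would contain butter cookies only.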

3. Choosing a model

Once we are done with the data-centric steps, our next course of action is to select a model type. Data scientists have developed many models over the years, each suited to different purposes.

For instance, some models are better suited to dealing with text, while others are better equipped to handle images.

4. Training

At the core of the machine learning process is the model's training. The bulk of the “learning” is done at this stage. Here we use the part of the data set allocated for training to teach our model to differentiate between the 2 cookies.
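What "training" means depends on the model chosen. As one simple possibility, the sketch below trains a nearest-centroid classifier: it learns the average feature values of each kind of cookie. The training rows and the name `train_centroids` are hypothetical.

```python
def train_centroids(rows):
    """rows: list of ((spots, diameter_cm), label). Returns {label: centroid}."""
    sums = {}
    for (spots, diameter_cm), label in rows:
        total = sums.setdefault(label, [0.0, 0.0, 0])
        total[0] += spots
        total[1] += diameter_cm
        total[2] += 1  # number of examples seen for this label
    # The "learned" model is just the average feature vector per label.
    return {
        label: (total[0] / total[2], total[1] / total[2])
        for label, total in sums.items()
    }

# Hypothetical training portion of the data set.
training_rows = [
    ((1, 7.5), "chocolate chip"),
    ((1, 8.5), "chocolate chip"),
    ((0, 5.0), "butter"),
    ((0, 6.0), "butter"),
]

model = train_centroids(training_rows)
```

For these rows, `model` maps `"chocolate chip"` to `(1.0, 8.0)` and `"butter"` to `(0.0, 5.5)`.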

5. Evaluation

With the model trained, we test it to see whether it would operate well in real-world situations. This is what the part of the data set reserved for evaluation is for: it checks the model’s proficiency by putting the model in a scenario where it encounters examples that were not a part of its training.
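A minimal sketch of this check, using accuracy (the share of correct answers) as the measure. The `predict` function here is only a stand-in for a trained model, and the held-out rows are invented for the example.

```python
def accuracy(predict, rows):
    """Share of rows for which the model's answer matches the true label."""
    correct = sum(1 for features, label in rows if predict(features) == label)
    return correct / len(rows)

# Stand-in for a trained model: it looks only at the spots feature.
def predict(features):
    has_spots, _diameter_cm = features
    return "chocolate chip" if has_spots else "butter"

# Hypothetical evaluation rows the model never saw during training.
# The third one is a chocolate chip cookie whose chips are not visible.
held_out = [
    ((1, 7.0), "chocolate chip"),
    ((0, 5.0), "butter"),
    ((0, 7.5), "chocolate chip"),
    ((0, 5.5), "butter"),
]

score = accuracy(predict, held_out)  # 3 of 4 correct
```

A score of `0.75` on unseen cookies is a more honest estimate of real-world behavior than a score measured on the training rows themselves.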

6. Parameter tuning

Once you’ve evaluated the model, you may want to see if you can further improve your training. We can do this by tuning our parameters. There were a few parameters we implicitly assumed when we did our training (often called hyperparameters), and now is an excellent time to go back, test those assumptions, and try other values.
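As an illustration, suppose the model is a k-nearest-neighbors classifier that looks only at cookie diameter. The number of neighbors `k` is then a value we would otherwise just assume. The sketch below tries several candidates and keeps the one that scores best on validation data; all data and names are hypothetical.

```python
from collections import Counter

def knn_predict(train, x, k):
    """Majority label among the k training rows closest to x."""
    nearest = sorted(train, key=lambda row: abs(row[0] - x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def accuracy(train, validation, k):
    hits = sum(1 for x, label in validation if knn_predict(train, x, k) == label)
    return hits / len(validation)

# Hypothetical (diameter_cm, label) rows. The 7.2 cm butter cookie is an
# unusually large one, which misleads the model when k is 1.
train = [
    (5.0, "butter"), (5.4, "butter"), (5.8, "butter"), (7.2, "butter"),
    (7.0, "chocolate chip"), (7.5, "chocolate chip"),
    (8.0, "chocolate chip"), (8.4, "chocolate chip"),
]
validation = [
    (5.2, "butter"), (5.9, "butter"),
    (7.3, "chocolate chip"), (8.1, "chocolate chip"),
]

candidates = [1, 3, 5]
scores = {k: accuracy(train, validation, k) for k in candidates}
# max() keeps the first best candidate, so ties go to the smaller k.
best_k = max(candidates, key=scores.get)
```

With this data, `k = 1` scores `0.75` while `k = 3` and `k = 5` both score `1.0`, so `best_k` is `3`.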

7. Prediction

The final step of the machine learning process is prediction. It is the stage where we consider the model ready for practical applications. Our cookie model should now be able to answer whether the given cookie is a chocolate chip cookie or a butter cookie.
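In code, prediction is simply a call to the trained model with the features of a new cookie. The sketch below assumes a nearest-centroid model whose centroids were produced by an earlier training step; the centroid values and the `predict` function are hypothetical.

```python
import math

# Assumed output of training: average (spots, diameter_cm) per cookie type.
centroids = {
    "chocolate chip": (1.0, 8.0),
    "butter": (0.0, 5.5),
}

def predict(has_spots, diameter_cm):
    """Return the label whose centroid is closest to the new cookie."""
    point = (1.0 if has_spots else 0.0, diameter_cm)
    return min(centroids, key=lambda label: math.dist(point, centroids[label]))

first = predict(True, 7.0)    # a spotted, fairly large cookie
second = predict(False, 5.0)  # a plain, small cookie
```

Here `first` is `"chocolate chip"` and `second` is `"butter"`. Note that this sketch compares raw feature values, so the diameter, with its larger range, influences the distance more than the spots feature does.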

What is deep learning?

Deep learning is a machine learning technique that teaches computers to do what comes naturally to humans: learn by example. Deep learning is a crucial technology behind driverless cars, enabling them to recognize a stop sign or distinguish a pedestrian from a lamppost.

It is also the key to voice control in consumer devices like phones, tablets, TVs, and hands-free speakers. Deep learning is getting lots of attention lately, and for a good reason. It’s achieving results that were not possible before.

How is Machine Learning being used today?

Machine Learning can automate mundane tasks and offer intelligent insights. Industries in every sector try to benefit from it. You may already use a wearable fitness tracker like Fitbit or a smart home assistant like Google Home. Neural Networks often handle other, more complex tasks, such as:

- Prediction: machine learning is used in prediction systems. For example, when you apply for a loan, the system needs to classify the available data to compute the probability of a default.

- Image recognition: machine learning is also used in facial recognition for images and videos. In a database of several people, each person is treated as a separate category.

- Speech Recognition: here, machine learning is used to translate spoken words into text. This is the case of software that converts audio into text, voice searches, and more. Voice user interfaces include voice dialing, call routing, and appliance control. It can also be used for simple data entry and the preparation of structured documents. Natural Language Processing (NLP) is the branch of machine learning most closely associated with speech recognition.

- Medical diagnoses: machine learning has several medical uses; for example, it can help recognize cancerous tissues.

- The financial industry and trading: lastly, companies use machine learning in fraud investigations and credit checks.

How to use Machine Learning in ITSM?

Like AI, machine learning applications can greatly aid ITSM and its proper implementation. Examples include predictive analytics, demand planning, predictive maintenance, improved search capabilities, and intelligent autoresponders. Teams that invest in developing skills in machine learning are better equipped to implement, manage, and optimize these tools within their IT infrastructure.

Many of these functionalities are part of InvGate’s AI engine, Support Assist. If you are looking to start harnessing the power of AI to boost your help desk capabilities, we encourage you to try it, as it seamlessly integrates into your IT infrastructure, improving first response times and data accuracy for better routing and reporting.

Key takeaways

We want you to leave with the main takeaway that machine learning is here to stay. The result is often stunningly accurate whether its learning process is supervised or unsupervised. Its proper implementation can spell the end of tedious and cumbersome tasks, thus reducing the workload on agents and managers.

Frequently asked questions

What are the 4 basics of machine learning?

The 4 basics of machine learning are:

- Supervised Learning: Supervised learning applies when a machine has sample data, i.e., input and output data with correct labels. The correct labels are used to check the correctness of the model's outputs. Additionally, supervised learning helps us predict future events based on past, labeled examples.

- Unsupervised Learning: In unsupervised learning, a machine is trained with input samples only, while the output is not known. The training information is neither classified nor labeled; hence, a machine may not always provide the correct output compared to supervised learning.

- Reinforcement Learning: Reinforcement Learning is a feedback-based machine learning technique. Agents must explore the environment, perform actions, and learn from the feedback (rewards or penalties) those actions receive. Reinforcement learning is like training a puppy to do tricks in exchange for treats.

- Semi-supervised Learning: Semi-supervised Learning sits between supervised and unsupervised learning. It works on datasets that have a few labels alongside unlabeled data.

However, the data is generally mostly unlabeled. Because labels are costly to produce, this reduces the cost of the machine learning model, although organizations may still have a few labeled examples for their own purposes. It can also increase the accuracy and performance of the machine learning model.

What are the 7 stages of machine learning?

The 7 stages of machine learning are:

- Collecting Data

- Preparing the data

- Choosing a model

- Training the model

- Evaluating the model

- Parameter tuning

- Making predictions

What are the 3 parts of machine learning?

The three parts of machine learning are:

- Representation: how to represent knowledge. Examples include decision trees, rules, instances, graphical models, neural networks, support vector machines, model ensembles, and others.

- Evaluation: the way to evaluate candidate programs (hypotheses). Examples include accuracy, precision and recall, squared error, likelihood, posterior probability, cost, margin, entropy, K-L divergence, and others.

- Optimization: the way candidate programs are generated, known as the search process. Examples include combinatorial optimization, convex optimization, and constrained optimization.
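To make the evaluation part more tangible, here is a short sketch of two widely used measures for classifiers, precision and recall. The labels and the function name `precision_recall` are assumptions for this example.

```python
def precision_recall(actual, predicted, positive):
    """Precision and recall for one class, treated as the positive class."""
    pairs = list(zip(actual, predicted))
    true_pos = sum(1 for a, p in pairs if a == positive and p == positive)
    false_pos = sum(1 for a, p in pairs if a != positive and p == positive)
    false_neg = sum(1 for a, p in pairs if a == positive and p != positive)
    # Precision: of everything flagged as positive, how much was right?
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    # Recall: of everything truly positive, how much was found?
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall

# Made-up ground truth and model output for six emails.
actual = ["spam", "spam", "spam", "spam", "ham", "ham"]
predicted = ["spam", "spam", "ham", "ham", "spam", "ham"]

precision, recall = precision_recall(actual, predicted, positive="spam")
```

For this data, the model flags three emails as spam and two of them really are, so precision is 2/3. It finds two of the four real spam emails, so recall is 0.5.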

What questions can machine learning answer?

Some questions that machine learning can answer if properly trained are:

How much or how many?

This is forecasting, also called regression. For example: how much money am I going to make next month, in which district, for one particular product? Regression models are checked during the evaluation stage by comparing their predicted numbers with known values.

Which category?

Which category does ‘X’ fall into? An everyday use case is sentiment analysis, which determines the sentiment category a tweet falls into. If a tweet mentions your company, is it good or bad?

What group does this fall into?

This is a job for a clustering algorithm. Say we’re looking at our customers or medical patients. How do they group? We look at their attributes and at how they cluster together and fall into groups. This allows us to consider how to approach our customers or patients.

Is this weird, or is something not normal?

This is anomaly detection. A typical scenario is when you use your credit card in a state other than where you reside, and your credit card company calls and says, “This is weird. Should your card still be working?”
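A very simple form of anomaly detection can be sketched with a z-score: flag any new value that lies too many standard deviations from the historical average. Note that this example swaps in purchase amounts rather than locations, and the amounts, the limit of 3, and the name `find_anomalies` are all assumptions.

```python
from statistics import mean, stdev

def find_anomalies(history, new_values, z_limit=3.0):
    """Return the new values that are unusually far from the historical mean."""
    mu = mean(history)
    sigma = stdev(history)
    return [v for v in new_values if abs(v - mu) / sigma > z_limit]

# Made-up past purchase amounts for one card, in dollars.
history = [20.0, 25.0, 22.0, 30.0, 28.0, 24.0, 26.0, 25.0]

# New purchases to check against that history.
flagged = find_anomalies(history, [27.0, 950.0, 23.0])
```

Only the 950.0 purchase is flagged. The other two sit well within the card's normal range.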

What options should we take?

This calls for a recommendation model: based on the history of the data, what is the best option going forward? A typical scenario would be a product recommendation on a website like Amazon.
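One basic way to build such a recommendation is to count which products were bought together in the past. The sketch below is a deliberately small illustration of that idea, not a description of how any particular website works; the orders and the name `recommend` are hypothetical.

```python
from collections import Counter

def recommend(orders, product, top_n=2):
    """Suggest the items most often bought together with the given product."""
    counts = Counter()
    for basket in orders:
        if product in basket:
            counts.update(item for item in basket if item != product)
    return [item for item, _ in counts.most_common(top_n)]

# Made-up order history: each inner list is one past order.
orders = [
    ["laptop", "mouse"],
    ["laptop", "mouse", "bag"],
    ["laptop", "bag", "mouse"],
    ["phone", "case"],
    ["laptop", "mouse", "stand"],
]

suggestions = recommend(orders, "laptop")
```

For someone viewing a laptop, `suggestions` is `["mouse", "bag"]`, because those two items appear most often in past orders that included a laptop.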